Welcome to My Homepage

I received my M.Sc. in Computer Science from Western University under the supervision of Prof. Pingzhao Hu.

During my M.Sc., I published three first-author papers at NeurIPS, ICLR, and ICML on structure-grounded multimodal reasoning.

I am seeking RA and PhD opportunities in world models, agents, multimodal reasoning, and post-training. [See My CV Here]

📝 Selected Publications

🎓 Top-Tier Conferences During My Master’s

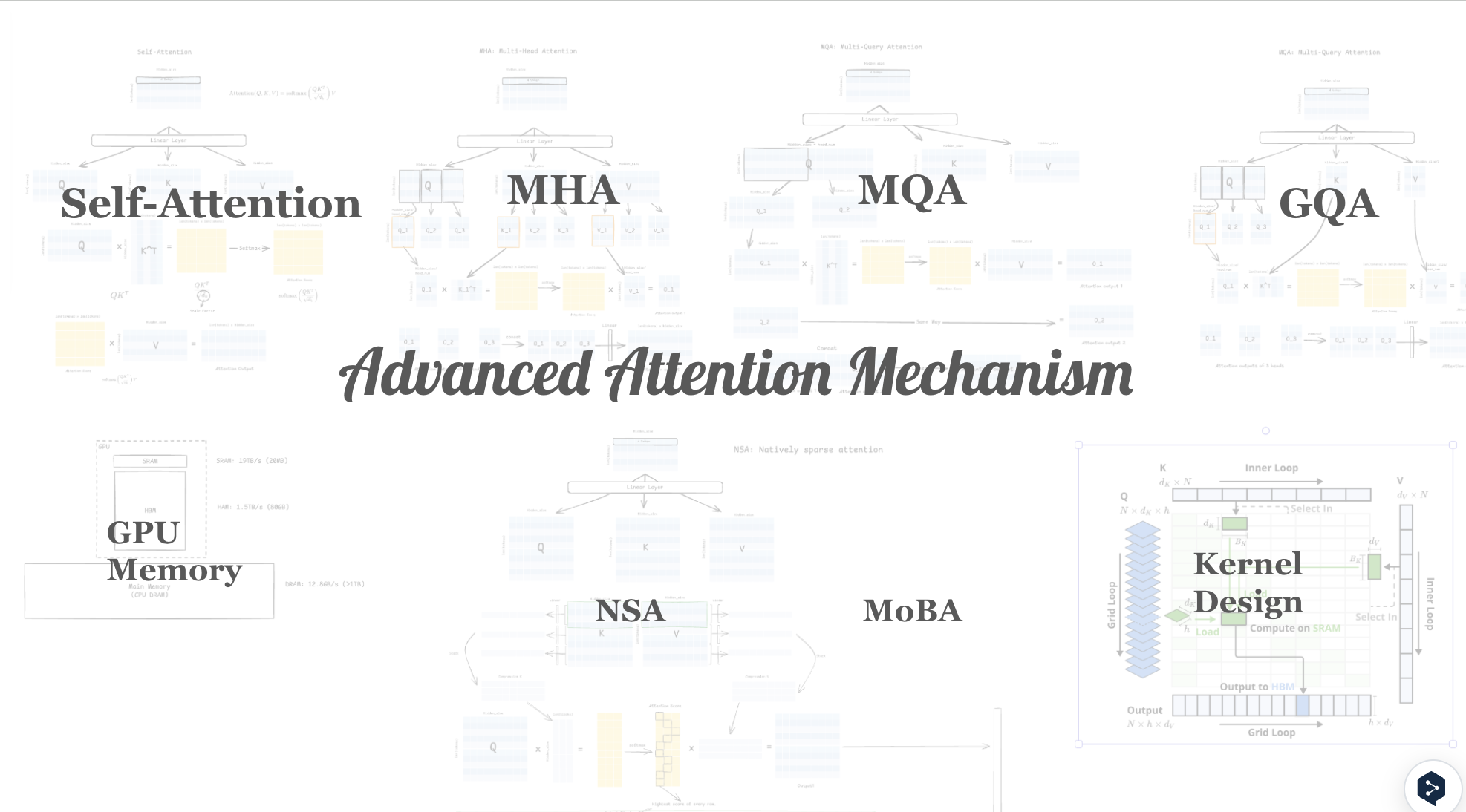

How can LLMs reason over structure at scale?

Scaling-Aware Adapter for Structure-Grounded LLM Reasoning [Code]

Zihao Jing, Qiuhao Zeng, Ruiyi Fang, Yan Yi Li, Yan Sun, Boyu Wang, Pingzhao Hu

Proposed scaling-aware patching and a geometry-grounding adapter for structure-grounded LLM reasoning over variable-size spatial graphs. Achieved top-1 performance on 17/18 reasoning tasks from Mol-Instruction, RNA-QA, and DNA-Chat benchmarks.

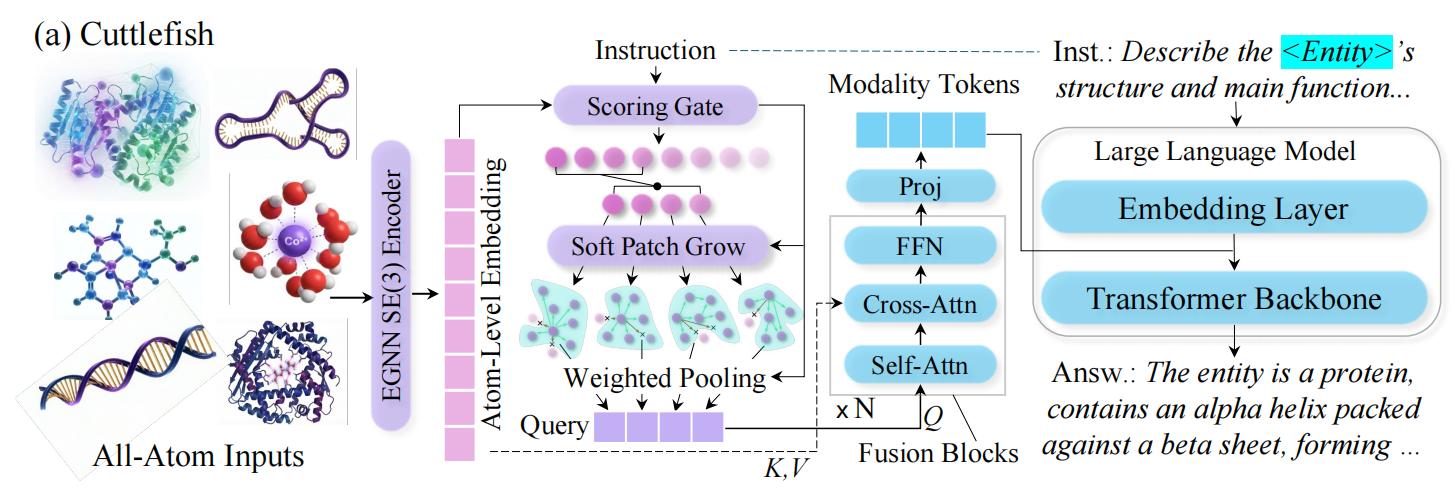

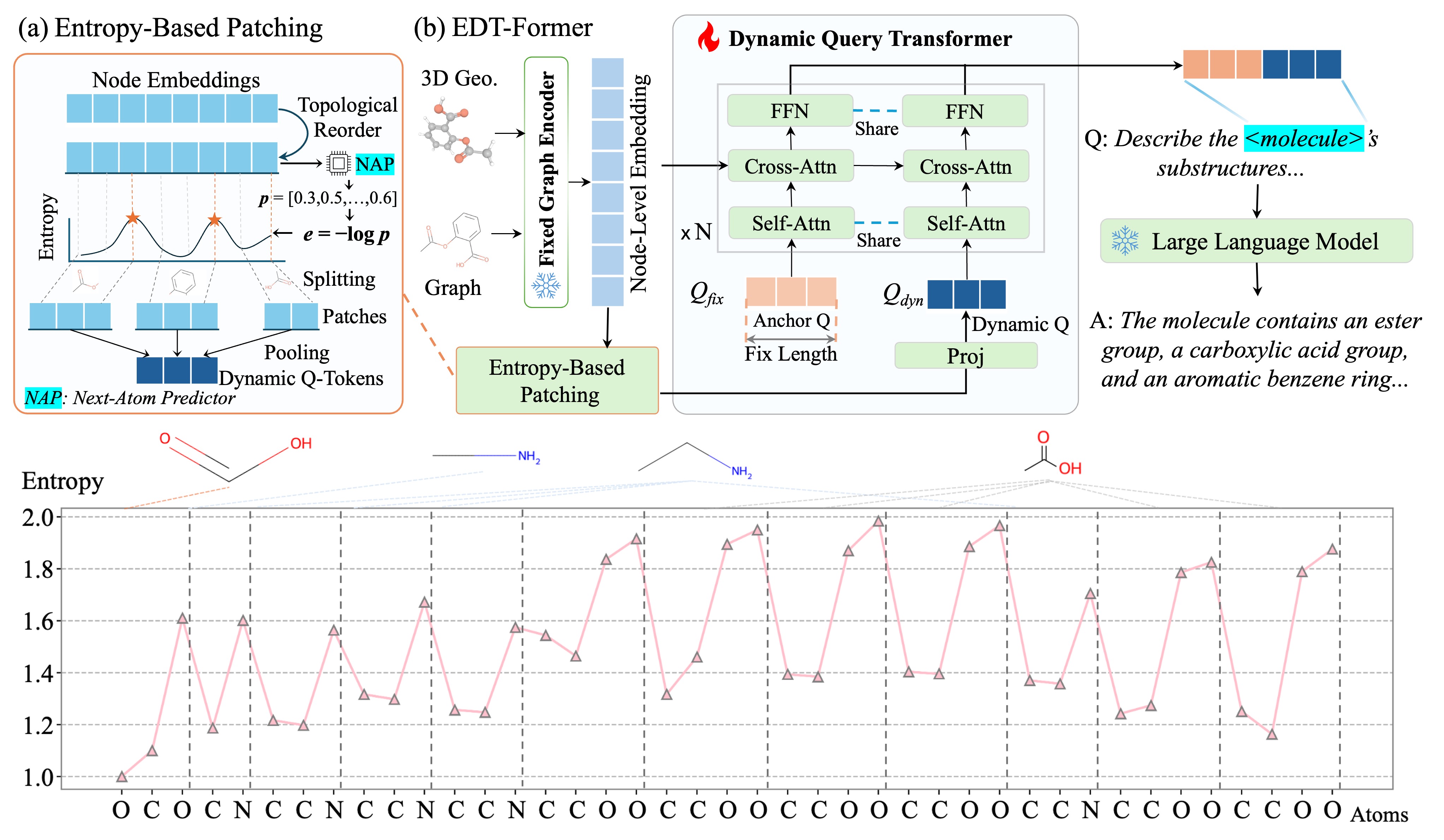

How can LLMs understand 2D/3D structure, e.g., molecules?

Entropy-Guided Dynamic Tokens for Graph-LLM Alignment in Molecular Understanding [Code] [Video]

Zihao Jing, Qiuhao Zeng, Ruiyi Fang, Yan Sun, Boyu Wang, Pingzhao Hu

Challenges: Current approaches (1) compress each graph into fixed-length tokens (Q-Former style), discarding fine-grained context for larger entities; (2) depend on heavy end-to-end tuning.

Our solutions: (1) Entropy-Guided Patching allocates dynamic tokens to informative regions based on entropy, preserving salient relations while avoiding over-compression; (2) alignment is trained on the connector only, avoiding heavy end-to-end tuning. SOTA on 9/10 molecular tasks and 7/7 Mol-Instruction benchmarks.

How can foundation transformers better embed 1D–3D structures?

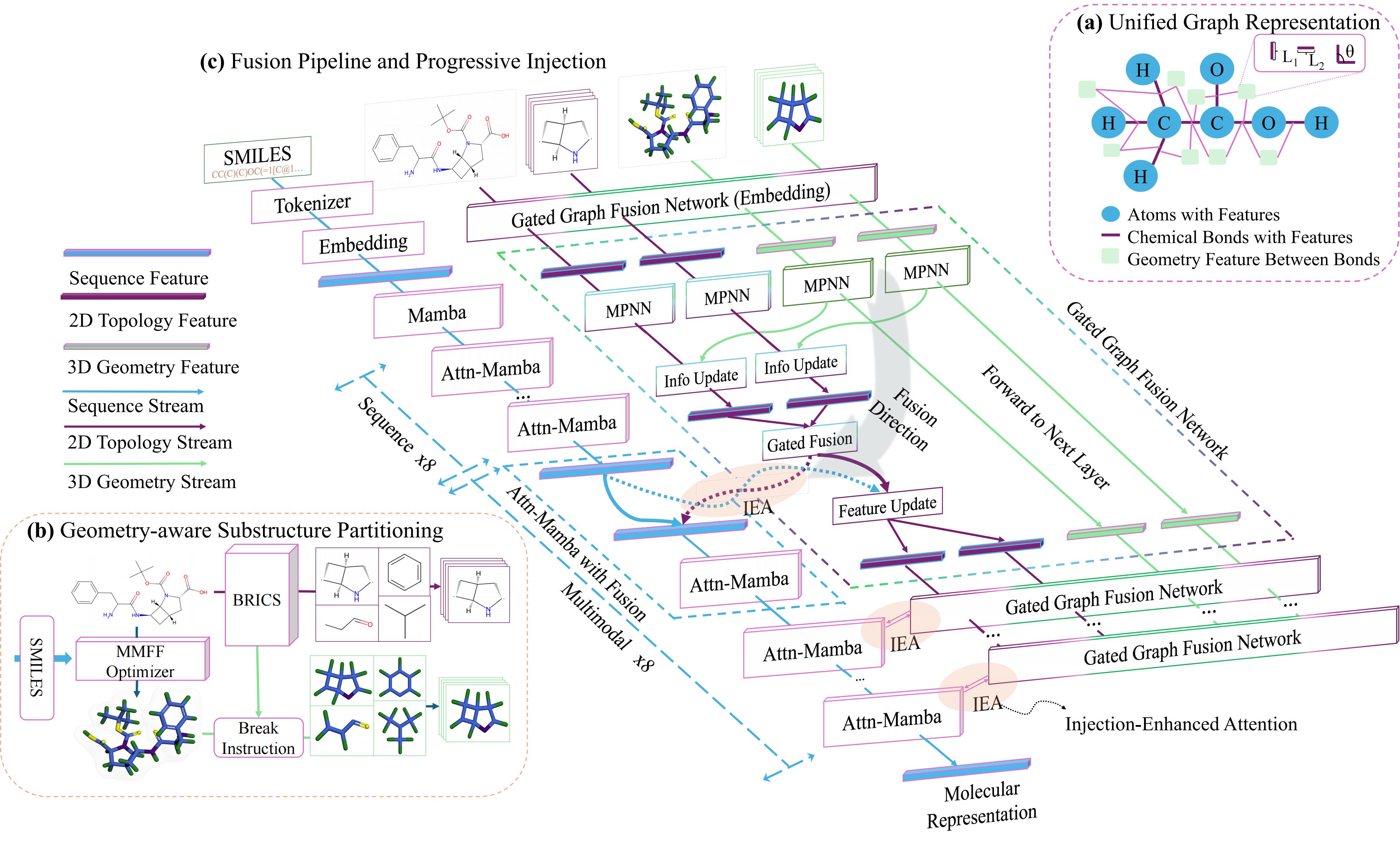

Structure-Aware Fusion with Progressive Injection for Multimodal Molecular Representation Learning [Code] [Video&Poster]

Zihao Jing, Yan Sun, Yan Yi Li, Sugitha Janarthanan, Alana Deng, Pingzhao Hu

Challenges: Molecular modeling suffers from (1) inconsistent 3D inputs: the same entity can yield different 3D inputs, destabilizing representations; (2) naive fusion: early, symmetric mixing lets noisy 3D inputs dominate (modality collapse).

Our solutions: (1) A Structured Fusion Pipeline aligns 2D/3D inputs into a stable prior, reducing sensitivity to 3D noise; (2) Progressive Injection asymmetrically injects this prior into the main stream, preventing fusion collapse.

🎓 Additional Work and Co-authored Publications

- Junqin Huang, Zhongjie Hu, Zihao Jing, Mengya Gao, Yichao Wu. Piccolo2: General Text Embedding with Multi-Task Hybrid Loss Training. SenseTime Technical Report, 2024.

- Zihao Jing, Yuxi Long, Ganlin Feng. Pruning for Generalization: A Transfer-Oriented Spatiotemporal Graph Framework. Preprint.

- Qiuhao Zeng, Jerry Huang, Peng Lu, Ruiyi Fang, Gezheng Xu, Zihao Jing, Yufei Cui, Charles Ling, Gang Niu, Boyu Wang. Attention with Routed-Memory for Learnable Sparse Control. ICML 2026.

- Ruiyi Fang, Jingyu Zhao, Shuo Wang, Ruizhi Pu, Bingheng Li, Jiale Cai, Zhihao Li, Zihao Jing, Jian Zhu, Song Tang, et al. SAGA: Structural Aggregation Guided Alignment with Dynamic View and Neighborhood Order Selection for Multiview Graph Domain Adaptation. ICLR 2026.

- Alana Deng, Sugitha Janarthanan, Yan Sun, Zihao Jing, Pingzhao Hu. Distilling and Adapting: A Topology-Aware Framework for Zero-Shot Interaction Prediction in Multiplex Biological Networks. ICLR 2026.

For a complete list of publications, see my Google Scholar.

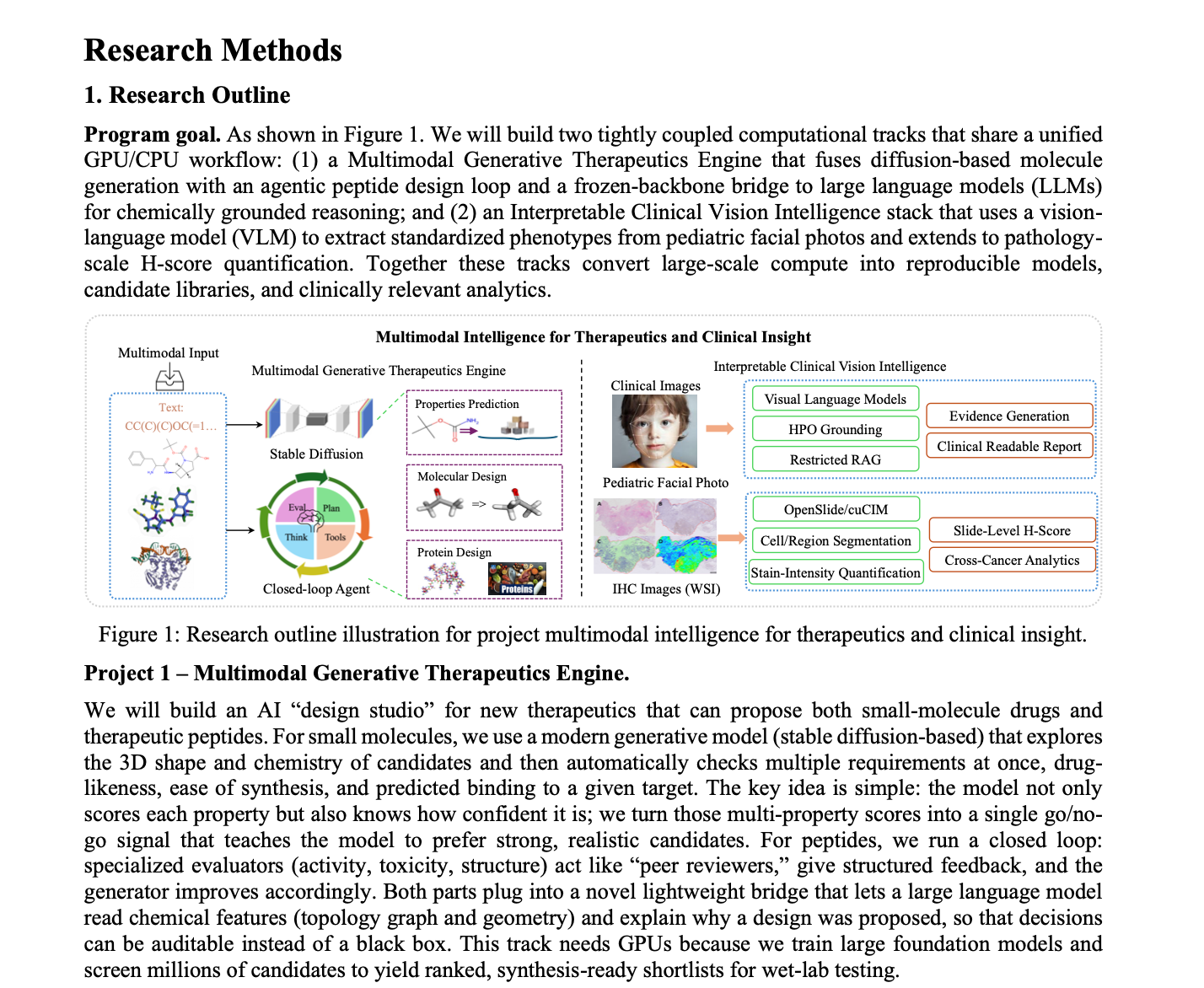

📄 Research Funding and Grant Contributions

Received 25 RGU-years (Reference GPU Unit-Years, equivalent to 5×A100-80GB GPU-years) on Canada’s national supercomputing cluster for our lab via the Digital Research Alliance of Canada, Resources for Research Groups 2026 grant; estimated commercial rental value: ~US$80K.

📖 Education

- 2024.09 - 2026.04, M.Sc. in Computer Science, Western University. London, Canada.

- 2019.09 - 2024.06, Bachelor of Software Engineering, Beihang University. Beijing, China.

💻 Internships

SenseTime - LLM Research Intern | 2023.09–2024.06

- Text embeddings (Piccolo2): Trained general-purpose embedding models with multi-task hybrid-loss objectives; built end-to-end training/evaluation pipelines and led iterative optimization of a generative embedding LLM; achieved top-1 ranking on C-MTEB (May 2024).

- Domain LLM adaptation (100B): Fine-tuned a 100B-parameter LLM for vertical livestream marketing; drove data/recipe iteration and productionized the model for deployment at Sina Weibo.

- LLM research, engineering & scaling: Gained hands-on experience with large-scale pretraining/fine-tuning codebases (SenseNova series), hyperparameter tuning, experiment tracking, and reproducible training workflows on multi-GPU infrastructure.

Jina AI - AI Research Intern | 2023.04–2023.09

- LLM engineering: Improved the RunGPT interface and contributed solutions to the Llama open-source ecosystem.

- Applied LLM: Implemented an LLM-based denoising and sentiment-analysis pipeline for Budweiser public-opinion analysis; reduced operational cost by >13%.

- Model commercialization: Led evaluation/tuning of a super-resolution model; executed performance testing and produced pricing recommendations.

🎤 Selected Talks

👁 Academic Service

Conference Reviewer: ICLR 2026 (5 papers), ICML 2026 (6 papers)

Workshop Reviewer: Time Series in the Age of Large Models (TSALM), ICLR 2026 (2 papers)

Journal Reviewer: ACM Transactions on Knowledge Discovery from Data (TKDD)

Journal Reviewer: IEEE Transactions on Neural Networks and Learning Systems (TNNLS)

Conference Volunteer: NeurIPS 2025, San Diego Convention Center

🧑‍🏫 Mentorship

Teaching Assistant, COMPSCI 2211 Software Tools and Systems Programming | Fall 2024 and Fall 2025

Teaching Assistant, COMPSCI 3305 Operating Systems | Winter 2025 and Winter 2026

Mentored junior students on operating systems, software tools, Linux workflows, and research coding practices.

📈 Technical Skills

- LLMs: pretraining, post-training, multimodal alignment, and agent workflow design.

- Systems and HPC: Linux, Slurm, Docker, Singularity, Conda/Mamba, Git, and reproducible environments.

- Distributed Training: PyTorch DDP/FSDP, DeepSpeed, multi-GPU/node training, profiling, and monitoring.

- Programming: Python, C/C++, Java, MATLAB, SQL, Bash, JavaScript, and full-stack development.

🎖 Honors and Awards

- 2023 Silver Prize, Feng Ru Cup Science and Innovation Competition (University-level).

- 2021 Third Prize, 13th National Undergraduate Mathematics Competition (National-level).

- 2021 Third Prize, 32nd Beijing Undergraduate Mathematics Competition (Province-level).

- 2021 Outstanding Student Leader Award (University-level).

- 2021 Third Prize, Physics Academic Competition (University-level).

- 2020 H Prize, Mathematical Contest in Modeling.

👨‍🏫 Research Values from My Mentors

- Prof. Pingzhao Hu, Western University: “Write a paper such that reviewers neither can nor need to ask questions.”

- Prof. Boyu Wang, Western University: “Read 20+ related papers before discussing ideas with me.”

- Yifu Ding, PhD in CS, Beihang University: “A paper should present clear, well-aligned challenges and novelties.”

- Qiuhao Zeng, Postdoc in CS, University of Toronto: “If you dig one direction deeper, you will always uncover novelty.”